April is here and you may be thinking about putting together project evaluations or preparing your Trustee Annual Report and considering how you demonstrate the impact of your services.

Now is an ideal time to think about using your Outcomes Stars data. Even if you haven’t been using Outcomes Stars for long and don’t have lots of review Stars, read on… you can still use Star data to evidence the need for your service.

In this article we have gathered some tips and ideas to help you use Star data in your annual reports.

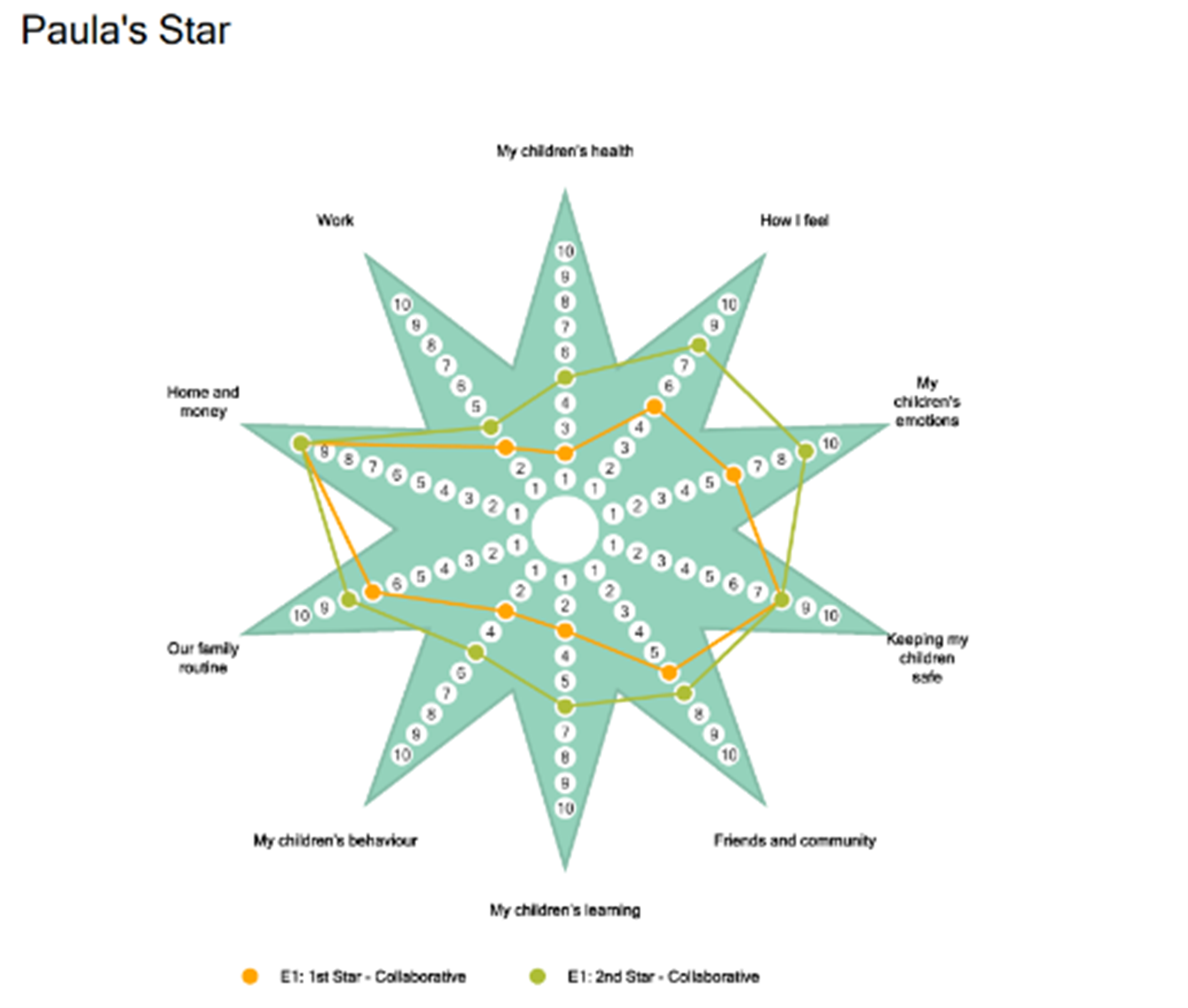

Use Star Charts to bring case studies alive

You probably have lots of stories of amazing personal change achieved by people you support, and already include individual case studies in your annual reporting. You can illustrate this by adding a Star Chart showing someone’s first and last/latest Star readings.

This is a great way to show the changes that have been made. Along with the narrative in your case study, a Star Chart will help you tell the story of how the support provided facilitated progress on the Journey of Change in relevant outcome areas.

If you don’t use Star Online, why not use a photo of your hand-plotted Star Chart?

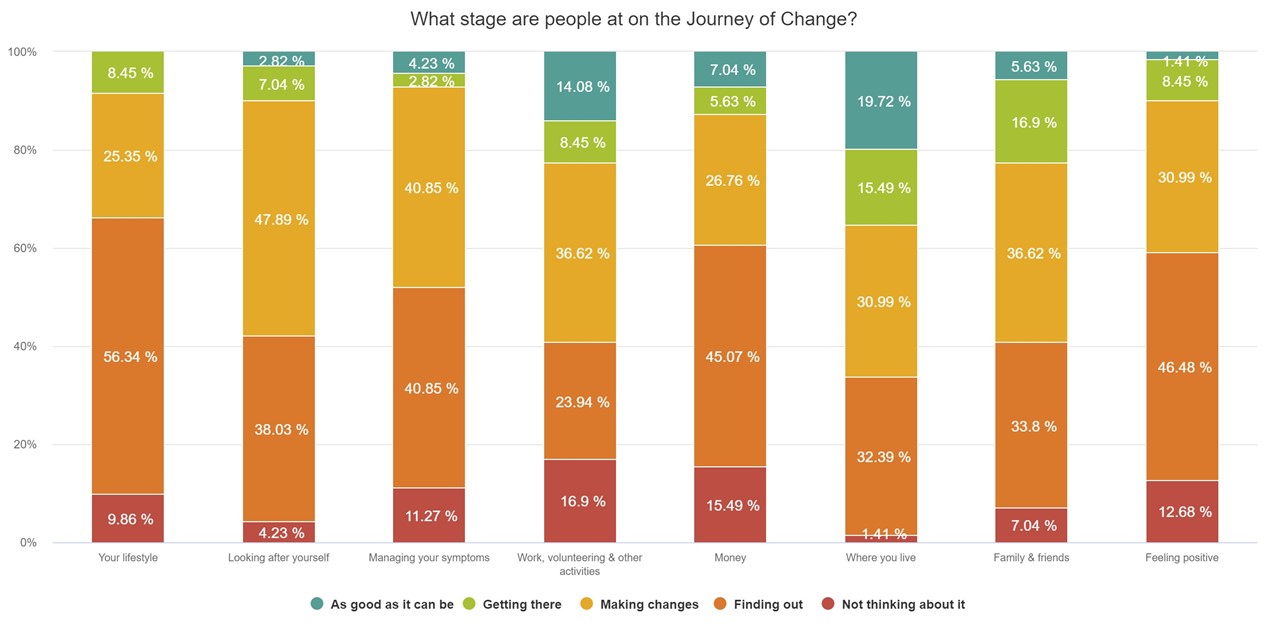

Taking a snapshot view

Snapshot reports look at Outcomes Stars completed across a cohort of people at a particular point in their support. A great way of using a Snapshot report is to look at everyone’s first Stars to see how the readings are distributed across the Journey of Change in each outcome area (by working out the percentage of people with readings at each stage, in each outcome area). This will give you a profile of the needs people have when they first start support.

Use this information to illustrate why you deliver the kind of interventions that you do – this can show that your service is evidence led and is focusing resources where they are needed most.
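If you keep first Star readings in a spreadsheet or export rather than relying on a ready-made report, the percentage calculation described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: readings are recorded as numeric stages, the function name `snapshot_profile` is hypothetical, and the readings in the example are made up.

```python
from collections import Counter, defaultdict


def snapshot_profile(first_stars):
    """Return {outcome_area: {stage: percentage of people at that stage}}.

    first_stars is a list with one dict per person, mapping each
    outcome area to that person's first Star reading (a numeric stage).
    """
    counts = defaultdict(Counter)
    for star in first_stars:
        for area, stage in star.items():
            counts[area][stage] += 1

    profile = {}
    for area, stage_counts in counts.items():
        total = sum(stage_counts.values())
        profile[area] = {
            stage: round(100 * n / total, 1)
            for stage, n in sorted(stage_counts.items())
        }
    return profile


# Made-up first Star readings for four people (illustrative only).
example_first_stars = [
    {"Money": 1, "Where you live": 3},
    {"Money": 2, "Where you live": 3},
    {"Money": 1, "Where you live": 4},
    {"Money": 3, "Where you live": 2},
]

profile = snapshot_profile(example_first_stars)
for area, percentages in profile.items():
    print(area, percentages)
```

In this made-up example, three of the four people start at the lowest two stages for Money, which is the kind of headline figure you might quote when explaining why a service focuses on that area.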

Example of how an organisation may use a Snapshot (SS) report:

We use the Well-being Star™ to help us identify people’s needs when they start the three-month social prescribing programme. Over 60% of our programme participants are either not yet thinking about or finding out about how they could improve their lifestyle, so our programme focuses on one-to-one and small group work to help people open up about their lifestyle and consider the impact this might have on their existing health conditions.

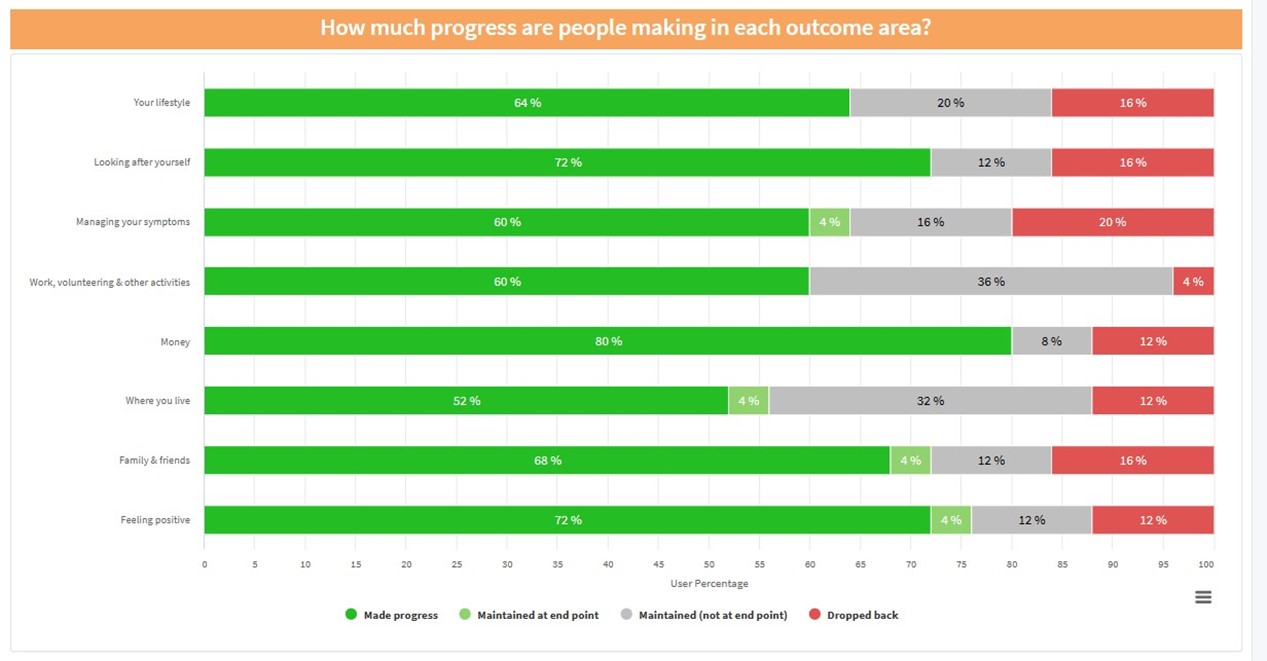

Illustrating the distance travelled

Outcomes Stars are designed to show the progress made by the people you support, and it is really satisfying to be able to collate Star readings to show this progress across a service or organisation. If enough people have completed two or more Stars, readings can be collated for a service or organisation to capture distance travelled and demonstrate the percentage of people that have moved forward, maintained or dropped back over the course of a year.
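As a rough illustration of how the distance-travelled percentages described above could be worked out by hand for one outcome area, here is a minimal Python sketch. It assumes numeric readings and treats any increase between the first and latest Star as moving forward and any decrease as dropping back; your own reports may define these categories differently. The function name `distance_travelled` and the readings are hypothetical.

```python
from collections import Counter


def distance_travelled(pairs):
    """Summarise change between first and latest readings for one outcome area.

    pairs is a list of (first_reading, latest_reading) tuples, one per
    person with two or more Stars. Returns the percentage of people who
    moved forward, maintained or dropped back.
    """
    labels = ("moved forward", "maintained", "dropped back")
    if not pairs:
        return {label: 0.0 for label in labels}

    counts = Counter()
    for first, latest in pairs:
        if latest > first:
            counts["moved forward"] += 1
        elif latest < first:
            counts["dropped back"] += 1
        else:
            counts["maintained"] += 1

    total = len(pairs)
    return {label: round(100 * counts[label] / total, 1) for label in labels}


# Made-up (first, latest) readings for five people in one outcome area.
example_pairs = [(1, 3), (2, 2), (2, 4), (3, 2), (1, 2)]

summary = distance_travelled(example_pairs)
print(summary)
```

Running the same calculation for each outcome area gives you a set of figures from which to pick the headline numbers for your narrative.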

In your report narrative, you might want to discuss progress made across all outcome areas around the Star, or you might prefer to focus on a few areas that your service targets through its interventions by selecting a few headline figures from the data in the report.

Example of adding narrative to a Distance Travelled (DT) report:

As evidenced by our use of the Well-being Star™, which we complete with participants on our social prescribing programme, 80% of participants made positive change in the area Money following our successful collaboration with our local CAB debt and benefits service. This is a big improvement on the previous year, when Money was the area in which we saw significant need (people were at the lowest two stages of the Journey of Change scale) and the least positive change.

Explain what your distance-travelled data is showing you and what you have learned from it, and include plans for service delivery going forward based on the findings.

Don’t be afraid to report on people dropping back or maintaining, or to acknowledge change that isn’t as strong as you would like. In some areas positive change takes longer, or depends on resources that aren’t available or are outside the control of your service. Reporting on this will show stakeholders that you understand the data you are getting from Outcomes Stars and that you use it to reflect on your provision and the wider context you work in.

For example:

Although we continue to see less positive change in the outcome area Where you live on the Well-being Star™, this is an area where it is often difficult to achieve results within the three-month programme. For example, referrals to the Council’s aids and adaptation team for an assessment typically take about 10 weeks.

When using Outcomes Stars to evidence your impact, give the correct title of the Star you use and include the ™ symbol to show you are using a recognised, evidence-based outcomes measurement tool.

Further support

Triangle is here to support you to make the most of your Star data. If you need any support or have any questions, get in touch with your main point of contact at Triangle.

We run regular webinars to help you get the most from your Star data and use the Star Online report dashboards. You can book a slot and find out more information about our webinars below.

Share your practice with Triangle

We would love to see examples of where you have used your Outcomes Stars data to evidence your impact or where you have learnt something interesting – please get in touch to share your experience of using Star data.

Dr Anna Good: Dr Anna Good is a Research Analyst at Triangle: a large part of her role involves testing the psychometric properties of the Star, conducting research and supporting organisations to make the best use of Star data. After completing an MSc and a PhD in Psychology with specialisms in behaviour change interventions and psychological research methods, Anna spent a number of years as a post-doctoral researcher, including two years as principal investigator on a prestigious grant examining health behaviour change.
